This post is part 2 of a 382-part series on how to manage database changes, primarily for SQL Server, starting here.

I figured this would be a good week to discuss ways that you can make your world a better place by making small changes to things you do in your everyday work. No, this post is not about inconvenient truths or sustainability or hybrids or manbearpig. This post is about the importance of local development databases.

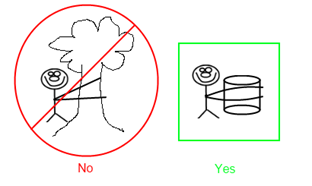

The situation you see all too often is that a development team has a single database server in their development environment that they share, and everyone is developing application code locally while simultaneously making changes to a shared database instance. Bad, bad, bad.

Captain Obvious

These days, most everyone develops their code locally. That’s just what you do. Many developers have learned the hard way that this is important, and won’t tolerate any divergence. And for the less experienced developers who are doing it just because they are told to, eventually they will make the same mistakes and learn from them too. This is such an easy lesson to learn that you don’t see too many people violate it intentionally.

Even if you HAVE to develop on a server environment, you’ll probably at least find a way to isolate yourself. For example, SharePoint developers don’t tend to install the SharePoint platform on their local machines, mostly because it requires a server OS, but also because SharePoint is pure, unadulterated evil that will steal the very soul of any machine it comes into contact with. Nonetheless, in those cases where a local machine is not practical, the developer will install the SharePoint software onto a virtual machine so that they can still work in isolation.

This local development approach is critically important to any form of version control or change management. For all practical purposes, developers must have a workable environment that they can fully control and work in peace. From there, developers check their code into source control, and hopefully it gets built from source control before being deployed to another server. This gives each developer a degree of control over how much the other developers can screw them up, and more importantly it ensures that every change is traceable back to a date and time and person responsible.

This approach is so ingrained in so many developers that we often take it for granted. Just try to remind yourself regularly how awful it was that time that everyone was working directly on the same development server, and nobody could keep track of who changed what when. Or better yet, how fired up everyone got the last time somebody sneaked directly into the production server and started mucking around.

The database is like the village bicycle

I am consistently surprised how often developers go to so much trouble to isolate their local application development environment, and then point their local application code to a shared development database server that the whole team is working on.

If you never need to make database changes, and nobody on your team needs to make database changes, this can certainly work. In that case, the database behaves like a third party service, rather than an actively developed part of the system.

However, if you are ever making database changes, you need to isolate your database for the exact same reasons that you need to isolate your application code.

Imagine that you are working on a project that involves several layers of DLLs communicating with each other. Because you are in active development, you and your team are constantly making changes that affect the interfaces between those DLLs. The result is that you continually need to check in your changes in whole batches; you can’t just check in a few files here and there because you will be breaking the interfaces for anyone else working in that code.

The same rules must apply to the databases as well, for all of the same reasons. At any given point in time, anyone should be able to pull the code that is in source control, build it, and run it. However, if I’m making a series of changes to my local code and the shared development database, my crazy C# changes are isolated on my local machine, but coworkers are getting my database changes as they happen, so their systems will stop working all of a sudden, and they won’t even know why, or worse yet they will know exactly why and I’ll be the guy “who busted everything up.”

Better yet, after a few days of wasting time on a bad design, I give up on it, and with one or two clicks I can undo all of my code changes and roll back to the main development code stream. However, there is no one-click rollback to the database schema, and so now those changes need to be manually backed out. Hopefully I kept a good list of the changes so I can do this without missing anything, but we all know that a few things will get missed, and now the development database becomes even more of a mutant branch of the true database schema, full of changes that nobody remembers or owns, and it is all going to blow up and make us all look like fools when we are rushing to deploy it into QA next month.
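To make that "good list of the changes" a little more concrete, here is a minimal sketch of one way to keep it: every forward change is paired with the script that undoes it, so backing out means replaying the undo scripts in reverse order. The table and index names are made up for illustration, and I'm using Python's built-in `sqlite3` module as a stand-in so the example runs anywhere; SQL Server syntax and tooling will differ.

```python
import sqlite3

# Hypothetical change list: (name, forward script, undo script).
# The object names are invented for this example.
CHANGES = [
    ("create_order_note",
     "CREATE TABLE order_note (id INTEGER PRIMARY KEY, body TEXT)",
     "DROP TABLE order_note"),
    ("index_order_note_body",
     "CREATE INDEX ix_order_note_body ON order_note (body)",
     "DROP INDEX ix_order_note_body"),
]


def apply_all(conn):
    """Run every forward script in the order it was written."""
    for _name, up_sql, _down_sql in CHANGES:
        conn.execute(up_sql)


def roll_back_all(conn):
    """Run every undo script in reverse order, newest change first."""
    for _name, _up_sql, down_sql in reversed(CHANGES):
        conn.execute(down_sql)


def object_names(conn):
    """List user-created tables and indexes, sorted by name."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE name NOT LIKE 'sqlite_%' ORDER BY name"
    ).fetchall()
    return [row[0] for row in rows]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    apply_all(conn)
    print(object_names(conn))   # schema with the experimental changes
    roll_back_all(conn)
    print(object_names(conn))   # back to the starting point
```

On a private local database, a list like this only has to undo your own changes. On a shared database it also has to avoid undoing anybody else's, which is exactly where things get missed.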

DVCS isn’t all bad

Distributed Version Control Systems like Git and Mercurial are the fun new fad in version control, and everyone seems to think that they are so much cooler than more traditional and linear systems like Vault. To me, it seems to grossly overcomplicate an already difficult issue by exacerbating the most problematic concepts, namely branching and merging. But I’m a crusty old conservative who scoffs at anything new, so maybe (and even hopefully) I’m wrong. I was quick to dismiss it as a new toy of bored astronauts, but some people a lot smarter than me have done the same and seem to be coming around to it, if not embracing it completely, so I will continue to believe that I am right for now, even though I know I’m probably wrong and will change my mind eventually.

But there is one idea in DVCS systems that I can get on board with, and that’s the idea that everyone is working in their own branch. As we’ve discussed, you simply cannot be working in the same sandbox as everyone else, or you will have intractable chaos. You should stay plugged into what everyone else is doing on a regular basis, usually through version control, but you must also isolate yourself, and you must do so thoroughly.

And here’s the thing (and this may very well be the idea that eventually opens my path to DVCS enlightenment): your local machine is a branch. Granted, it is not a very robust branch, because it only has two states (your current state and the latest thing in source control), but you are still essentially branched until you check in, at which point you will have to merge. It might be a really small merge, because the changes were small or backwards compatible, or because you were not branched off locally for that long, or you are the only one working on a feature, or because you’ve been communicating with the rest of your team, or because you are the only person who actually does any work, but you are merging nonetheless.

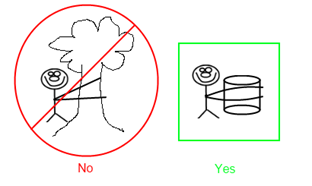

What does this have to do with databases? Branching is all about isolation. You must isolate your development environment, and you must do so thoroughly. If you think of your machine as simply a branch of the source code, it crystallizes the idea that everything you are doing locally is a full stream of code, and it must contain everything needed to run that code, and must represent all of the changes in that code, including the database. In a broader view, if you were to branch your code to represent a release or a patch or feature, you obviously should be branching your database code at the same time (assuming of course that your database is under version control). If that is the case, and if the code on your local machine is nothing more than a primitive branch of what is in source control, then your local machine should also have its own copy of the database.

Database scripting is hard, let’s go shopping!

I know. This makes pushing your code to the development server more difficult, because you have to script everything out, and honestly the last thing I really want to do is complicate the lives of developers. In fact, I think the primary purpose of most development processes should be to reduce friction, and to make a developer’s life as worry-free as possible, so that they can focus on the real complicated business problems they are paid to solve, not the silly process crap that people invent to make themselves appear smart and organized.

That being the case, it may make your life a little harder to develop locally, and then write up all of the scripts necessary to push those changes to the dev server, but it is definitely worth it. This is not a theoretical improvement that will hopefully save you time in the distant future, when design patterns rule and everybody’s tasks are on index cards and you’ve achieved 100% code coverage in your unit tests. No, this is a real, tangible, and immediate benefit, because you will save yourself effort when you deploy it to the next stage, namely QA or production. At that point, you’ll already have everything organized and listed out, and you did so when you were still working on the code and everything was still fresh in your mind. In my humble opinion, this is a much more maintainable process than everyone just thrashing around in a wild-west development database, and then after it all spending days trying to figure out which schema differences need to be included to release which features, because the chances of getting that right consistently are almost non-existent.
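Here is a rough sketch of what "everything organized and listed out" could look like in practice. It assumes a numbered list of change scripts plus a `schema_version` table that records which ones have already run; both the table and the scripts are hypothetical names I made up for this example. It uses Python's `sqlite3` so it is runnable as-is, not because that is how you would talk to SQL Server.

```python
import sqlite3

# Hypothetical ordered change scripts, written as each change was developed.
SCRIPTS = [
    (1, "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    (2, "CREATE TABLE invoice (id INTEGER PRIMARY KEY, "
        "customer_id INTEGER NOT NULL, total REAL NOT NULL)"),
    (3, "CREATE INDEX ix_invoice_customer ON invoice (customer_id)"),
]


def upgrade(conn):
    """Apply every script newer than the database's recorded version.

    Returns the list of version numbers that were applied on this call.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_version (version INTEGER PRIMARY KEY)"
    )
    current = conn.execute(
        "SELECT COALESCE(MAX(version), 0) FROM schema_version"
    ).fetchone()[0]

    applied = []
    for version, sql in SCRIPTS:
        if version > current:
            conn.execute(sql)
            conn.execute(
                "INSERT INTO schema_version (version) VALUES (?)", (version,)
            )
            applied.append(version)
    conn.commit()
    return applied


if __name__ == "__main__":
    local_db = sqlite3.connect(":memory:")  # stands in for your own machine
    qa_db = sqlite3.connect(":memory:")     # stands in for the QA server
    print(upgrade(local_db))  # first run applies every script
    print(upgrade(local_db))  # second run finds nothing new to apply
    print(upgrade(qa_db))     # QA gets the same scripts in the same order
```

The point is that the deployment to QA replays the same scripts you already ran locally, instead of somebody diffing two schemas after the fact and guessing which differences matter.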

And if this is really too much work for you to do well, maybe we can find you a ball to bounce with instead. Or maybe some UML diagrams and crayons to draw with. Either way, get the hell out of my code.

Beating a dead horse

Hopefully I’ve convinced you that you should have your own copy of the database for your local development. I could go on forever giving reasons for this. Or, I could give specific examples, like a recent client that did not follow this pattern, and our team was constantly breaking each other because the code and database were out of sync, even though we were just a small team of 3 experienced and practical developers sitting in a single office right next to each other, working together well and communicating all day, but the lack of database isolation made the issues unavoidable.

So yeah, I could go on and on. But I won’t, because it’s getting boring. If you’re agreeing with me by now, feel free to go read something else.

I can’t run SQL Server locally, my machine sucks!

I’ve heard a lot of people say that they can’t run SQL Server locally, and sometimes they are right, but I think a lot of the time it is an excuse.

Maybe you don’t have a license to SQL Server. That’s usually fine, SQL Server Express Edition is free. Sure, it has some limitations, like the lack of a SQL profiler, but there are great free tools out there like the one from AnjLab. And if you still need a full-featured copy, the developer edition costs less than $50. Can you or your company not spare $50 for something like that? Really?

Or maybe your machine doesn’t have enough memory. It’s true, SQL will eat up memory like nobody’s business and if you have Visual Studio 2008 and Outlook 2007 running, it can be pretty heavy. But I’ve found that as long as you have 3 or 4 GB of RAM, it works pretty well, and doesn’t everyone have that these days? Sure, a lot of you are stuck with crappy old machines that your employer gave you because he considers you to be a high-priced janitor, and he can’t justify in his mind spending a few hundred extra to help you be more productive, but in that case you have bigger problems than anything we’re going to solve here. I would say, if possible, you should even shell out a few hundred and get some more memory for your machine, even if it’s a work machine and they won’t reimburse you for it. I know plenty of people who would be opposed to this just out of principle, but those people and their principles can go have their own little pity party and see who comes; in the meantime I’d rather solve the problem and move on.

Too Beaucoup?

However there is certainly one potential problem that can be difficult to overcome. What if your existing database is just too damn big to run locally?

One recent client had a production database which was used for a million unrelated purposes, and it was 30 GB. Another recent client had the vast majority of their business data spread across two databases that were each about 300 GB. Sometimes, the database is just too big to copy down to your local machine. There are a few ways to deal with the problem.

Sometimes the best option is to separate the schema and the data. Strip down the data, get rid of the 300 GB, and get the minimum amount of sample data necessary to run your applications. Maybe clear it out entirely, and have some scripts or automated tests that generate a batch of sample data. Oftentimes this will require a lot of analysis to determine what is necessary, and what all of the data is being used for, but that’s not an entirely bad thing. If you get a good and portable development database out of it, while also getting a better understanding of how the data is being used, then that has a lot of benefits. Granted, this is not easy by any stretch, but it may be doable. It all depends on your situation.
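As a small illustration of the "scripts that generate a batch of sample data" idea, here is a sketch that builds an empty schema and fills it with a handful of fake rows. The `customer` and `invoice` tables, the row counts, and the value ranges are all invented for the example, and it runs against an in-memory SQLite database through Python's `sqlite3` module, not against SQL Server. A real schema would need far more thought about which data the application actually depends on.

```python
import random
import sqlite3


def build_sample_db(conn, customers=5, invoices_per_customer=3, seed=42):
    """Create a tiny hypothetical schema and populate it with fake rows.

    A fixed seed keeps the generated totals the same on every developer's
    machine, so everyone is testing against identical sample data.
    """
    rng = random.Random(seed)
    conn.execute(
        "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE invoice (id INTEGER PRIMARY KEY, "
        "customer_id INTEGER NOT NULL REFERENCES customer (id), "
        "total REAL NOT NULL)"
    )
    for customer_id in range(1, customers + 1):
        conn.execute(
            "INSERT INTO customer (id, name) VALUES (?, ?)",
            (customer_id, f"Customer {customer_id}"),
        )
        for _ in range(invoices_per_customer):
            total = round(rng.uniform(10, 500), 2)
            conn.execute(
                "INSERT INTO invoice (customer_id, total) VALUES (?, ?)",
                (customer_id, total),
            )
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    build_sample_db(conn)
    print(conn.execute("SELECT COUNT(*) FROM customer").fetchone()[0])
    print(conn.execute("SELECT COUNT(*) FROM invoice").fetchone()[0])
```

A script like this can live in source control right next to the schema scripts, which means a new developer can get a working local database without ever touching the 300 GB original.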

Another option is to set up a single high-powered development database server, and give each developer their own instance of the database on the server. This approach can have its own problems as well, such as people getting confused about which database instance belongs to whom, and having enough disk space to store the inevitable terabytes of data.

So if you have any of these problems, my sympathies to you, and I hope that you can find a workable solution.

The End

So hopefully you are now inspired to change your process a little bit, or just entertained for a little while.

Or, if you’ve been trying to do the same thing but aren’t getting the buy-in you need, you have a little more reference material for saying “I read an article about this on the interwebs, and some guy said…”. It’s one reason I make these things so long, as a favor to you, because the person you are arguing with isn’t actually going to read all of this crap, so maybe they will just give up and let you do whatever you want. The person that wins the argument usually isn’t the person who is right, it’s usually the person who is willing to waste the most time arguing about it.

Good luck!